Hello!

In the ChemBERTa article (https://arxiv.org/pdf/2010.09885.pdf), the authors used canonical SMILES (unique SMILES), which makes sense, because each molecule then has exactly one representation.

I was just wondering: would it be possible to train RoBERTa on a dataset with non-canonical SMILES (generic SMILES)? This would come in really handy, because we could then use the generic SMILES for data augmentation.

Here is what I mean:

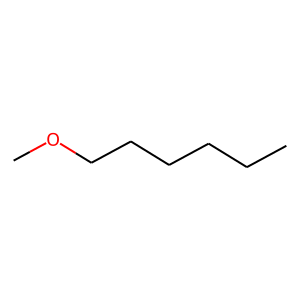

This molecule (1-methoxyhexane) with unique SMILES:

- CCCCCCOC

The same molecule with generic SMILES:

- CCCCCCOC

- C(C)CCCCOC

- C(CC)CCCOC

- C(CCC)CCOC

- C(CCCC)COC

- C(CCCCC)OC

- O(CCCCCC)C

- COCCCCCC

This makes sense if we imagine that each atom gets a unique index in RDKit (here from 0 to 7, because we have 8 atoms). So we have a permutation of 8 positions, but 7 of the atoms are carbons, so we can write 8!/7! = 8 distinct SMILES (one per choice of starting atom, as in the list above).
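To check the count of 8, here is a minimal sketch in plain Python. It is not RDKit's algorithm: `rooted_chain_smiles` is a hypothetical helper that only handles unbranched chains with single-letter atoms and single bonds, and it simply writes one SMILES per starting atom.

```python
def rooted_chain_smiles(atoms):
    """Return one SMILES per starting atom of an unbranched chain.

    `atoms` is the chain as a list of element symbols, e.g. list("CCCCCCOC").
    Assumption: linear molecule, single-letter symbols, single bonds only.
    """
    variants = []
    for i, root in enumerate(atoms):
        # Atoms before the root are written walking away from it, so reversed.
        left = "".join(reversed(atoms[:i]))
        right = "".join(atoms[i + 1:])
        if left and right:
            # Root sits inside the chain: one side becomes a branch.
            variants.append(f"{root}({left}){right}")
        else:
            # Root is a chain end: no branch needed.
            variants.append(root + left + right)
    return variants


variants = rooted_chain_smiles(list("CCCCCCOC"))
for smi in variants:
    print(smi)
print(len(variants), "strings,", len(set(variants)), "distinct")
```

For 1-methoxyhexane this reproduces the eight strings listed above. For a symmetric chain such as hexane (`list("CCCCCC")`), the two chain ends give the same string, so the number of distinct SMILES is smaller than the number of atoms.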

Note 1: the first generic SMILES is identical to the canonical one.

Note 2: at this point, I am not sure whether palindromes (like “never odd or even”) would cause any problems, though. For example, numbers 1 and 8 are mirror images of each other.

If we include molecules with rings, the calculations become a little bit harder but manageable.

There are some articles about this:

but looking at the code (https://github.com/EBjerrum/molvecgen), it does not use any complex augmentation; it just shuffles the atom order of the molecule and then regenerates the SMILES. I think this can produce a lot of duplicates in the dataset, especially with small molecules (like toluene).
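To make the duplication concern concrete, here is a rough sketch of the shuffle-and-regenerate idea as I understand it. It assumes RDKit is installed; `randomize_smiles` is my own name for the helper, not a function from molvecgen, and the number of draws (50) is arbitrary.

```python
import random

from rdkit import Chem


def randomize_smiles(smiles, rng):
    """Shuffle the atom order, then write a non-canonical SMILES."""
    mol = Chem.MolFromSmiles(smiles)
    order = list(range(mol.GetNumAtoms()))
    rng.shuffle(order)
    shuffled = Chem.RenumberAtoms(mol, order)
    # canonical=False keeps the shuffled atom order instead of canonicalizing.
    return Chem.MolToSmiles(shuffled, canonical=False)


rng = random.Random(0)  # fixed seed, only for reproducibility
variants = [randomize_smiles("Cc1ccccc1", rng) for _ in range(50)]  # toluene

# Every variant should still describe the same molecule.
canonical_forms = {Chem.MolToSmiles(Chem.MolFromSmiles(s)) for s in variants}

print(len(variants), "draws,", len(set(variants)), "distinct strings")
print("canonical forms:", canonical_forms)
```

For a molecule as small as toluene, the 50 draws collapse to a much smaller set of distinct strings, so some deduplication step seems necessary before using this for augmentation.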

Another article:

https://jcheminf.biomedcentral.com/articles/10.1186/s13321-019-0393-0

I was thinking of pre-training RoBERTa on an already augmented dataset (for example, we generate 5-10 SMILES variants for each molecule in the ZINC dataset). The resulting model could then be used just like ChemBERTa in the tutorial, but with generic (non-canonical) SMILES. And eventually, if we only have a small dataset for fine-tuning, it might be possible to apply the same kind of SMILES augmentation to train a larger network and reach better performance (similar to image augmentation in computer vision).
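The dataset preparation could look roughly like the sketch below. Everything here is hypothetical: `augment_dataset` is a made-up helper, `randomize` stands for any function that returns a random equivalent SMILES, and `toy_randomize` is only a stand-in that is valid for unbranched chains with single-letter atoms. The point is to cap the number of attempts and keep only distinct strings per molecule.

```python
import random


def augment_dataset(smiles_list, randomize, n_variants=5, max_tries=50):
    """Collect up to `n_variants` distinct SMILES strings per molecule.

    `randomize` is any callable mapping a SMILES to an equivalent SMILES.
    Small molecules may yield fewer than `n_variants` distinct strings,
    so the loop gives up after `max_tries` attempts.
    """
    augmented = {}
    for smi in smiles_list:
        seen = {smi}  # always keep the original string
        for _ in range(max_tries):
            if len(seen) >= n_variants:
                break
            seen.add(randomize(smi))
        augmented[smi] = sorted(seen)
    return augmented


rng = random.Random(0)


def toy_randomize(smi):
    # Stand-in only: reversing is valid for unbranched, single-letter chains.
    return rng.choice([smi, smi[::-1]])


augmented = augment_dataset(["C", "CCO", "CCCCCCOC"], toy_randomize)
for original, variants in augmented.items():
    print(original, "->", variants)
```

With a real randomizer, the same structure would apply to ZINC for pre-training. For a labeled fine-tuning set, each variant would simply inherit the label of its original molecule.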

Would love to hear from the community about this.